In recent years, the demand for accurately mapping an object's surroundings has increased notably, primarily due to the rise of robotics and autonomous systems (e.g. self-driving cars). Other applications, such as mapping hazardous or remote sites and detecting and analyzing changes in facilities or structures, are also actively researched, since advances in these areas lead to safer working and living environments for many people.

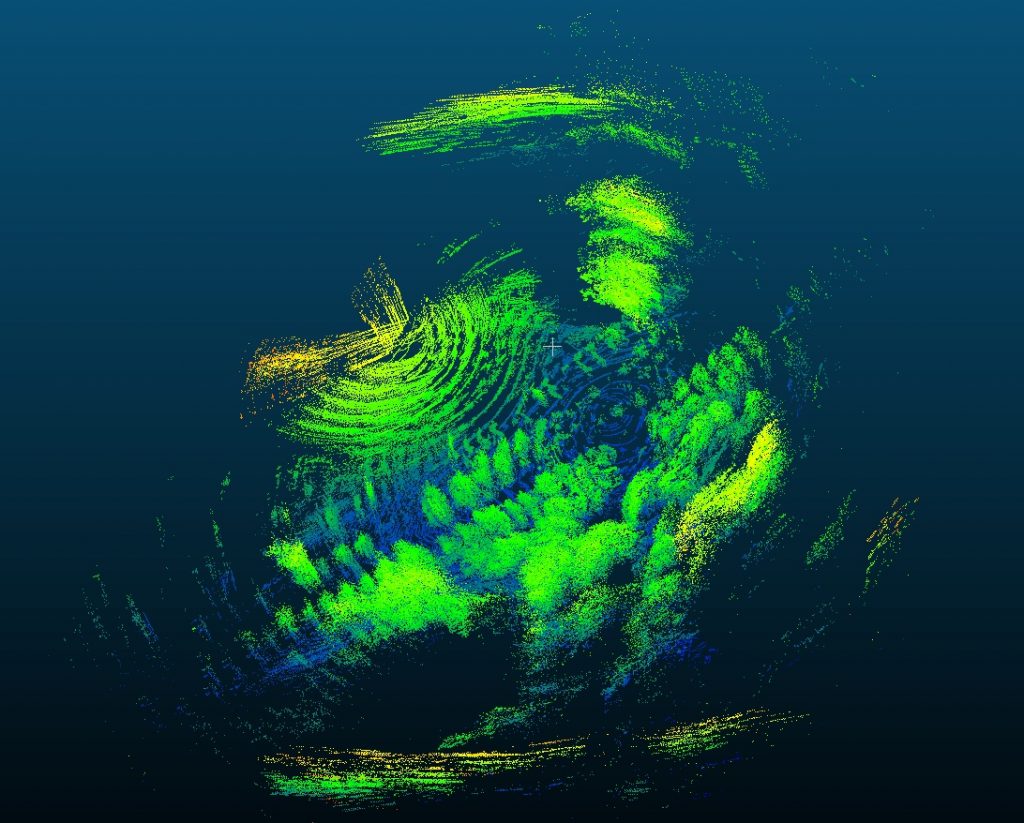

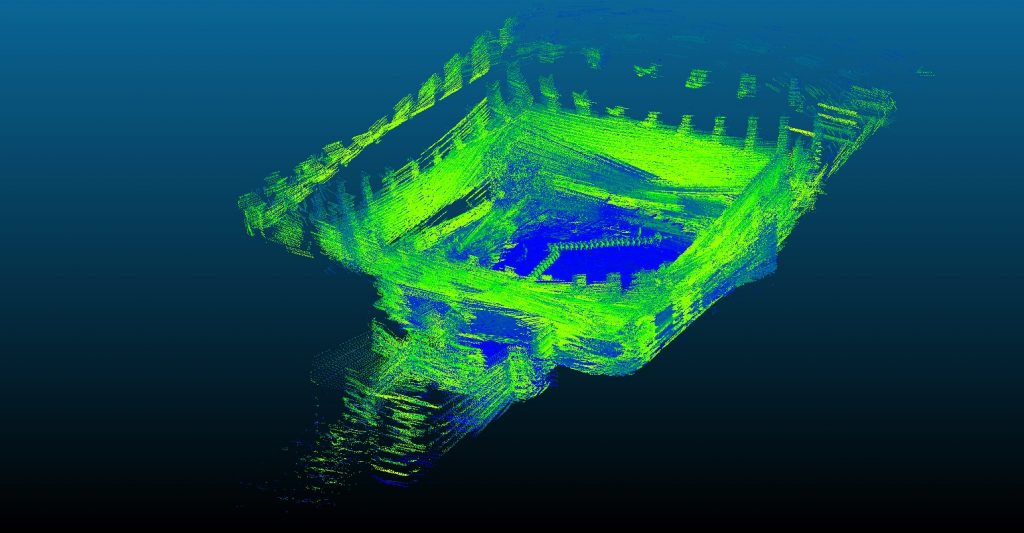

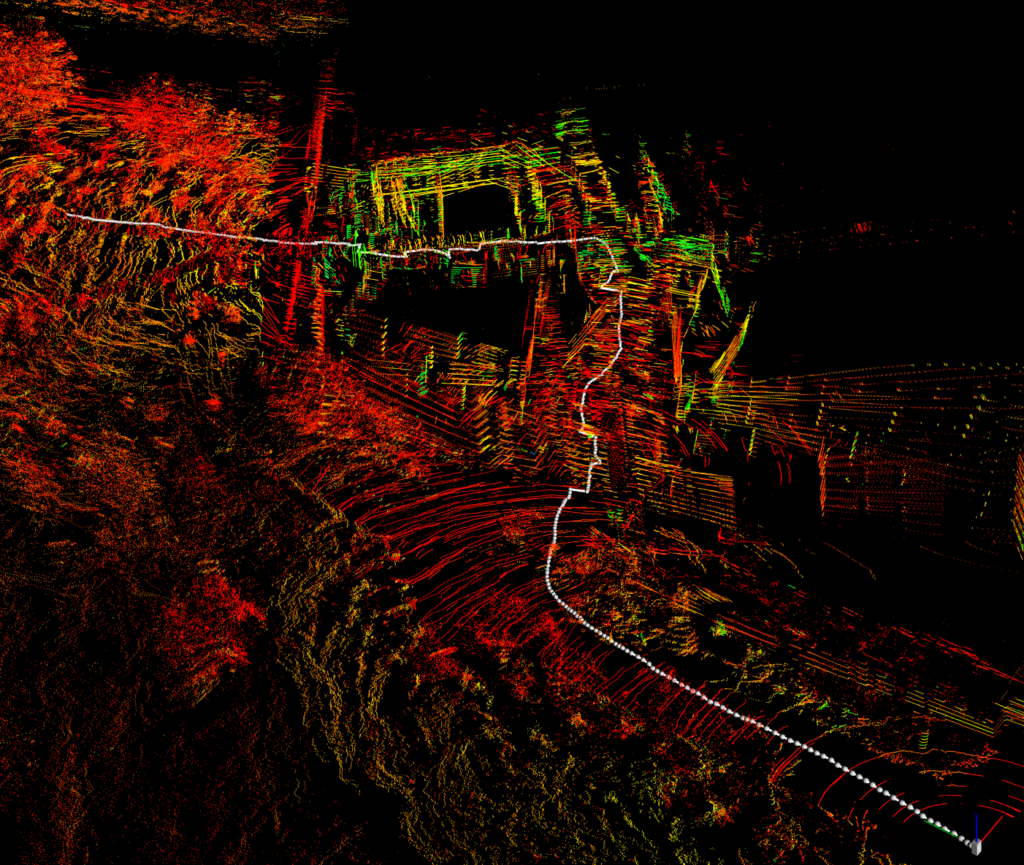

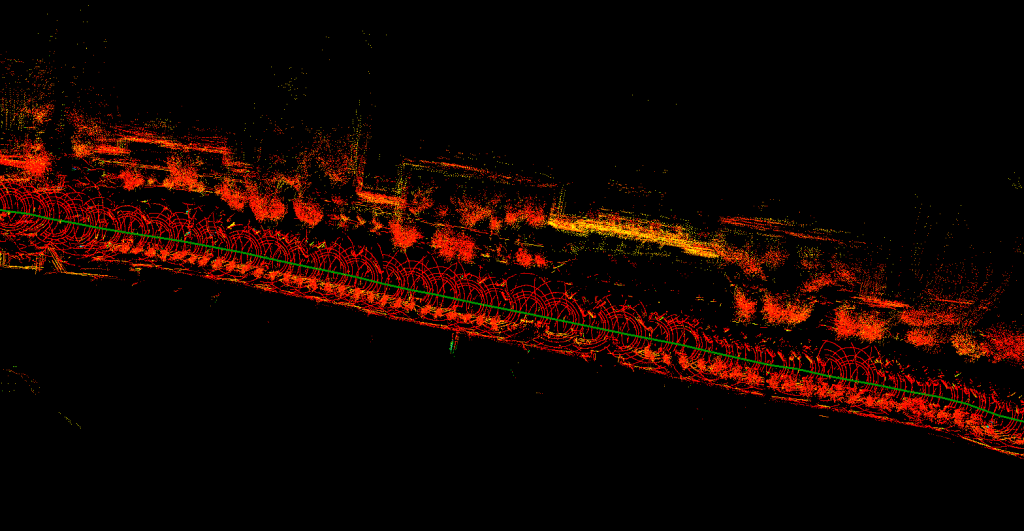

The goal of the project is to create a software framework for dynamically positioning a 3-dimensional point cloud captured with a LiDAR sensor, using sensor fusion (e.g. GNSS, IMU) and SLAM algorithms. The framework should combine different inputs to position the generated point cloud in 3D space with the highest possible accuracy, in a completely unsupervised manner. With suitable algorithms, the program should be able to weigh the reliability of the available localization sensors and thus decide which sensor (or algorithm) is likely to give the best result. For example, a GNSS sensor can provide higher accuracy outdoors, while SLAM algorithms can give better results indoors.
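One common way to weigh localization sources by reliability is inverse-variance weighting. The sketch below illustrates the idea only and is not taken from the project's code base: the `PositionEstimate` type and the `fuse` and `mostReliable` functions are hypothetical names, and it assumes every source reports its position in the same local frame together with a variance describing its uncertainty.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical type for illustration; not part of the project's API.
struct PositionEstimate {
    double x, y, z;   // position in a shared local frame, in metres
    double variance;  // reported uncertainty in m^2; smaller means more reliable
};

// Inverse-variance weighting: each source contributes in proportion to
// 1 / variance, so a precise source dominates a noisy one.
PositionEstimate fuse(const std::vector<PositionEstimate>& estimates) {
    assert(!estimates.empty());
    double weightSum = 0.0, x = 0.0, y = 0.0, z = 0.0;
    for (const PositionEstimate& e : estimates) {
        assert(e.variance > 0.0);
        const double w = 1.0 / e.variance;
        weightSum += w;
        x += w * e.x;
        y += w * e.y;
        z += w * e.z;
    }
    // The fused variance is the inverse of the summed weights.
    return {x / weightSum, y / weightSum, z / weightSum, 1.0 / weightSum};
}

// Index of the single most reliable source (lowest variance), for the case
// where the framework should pick one sensor or algorithm outright.
std::size_t mostReliable(const std::vector<PositionEstimate>& estimates) {
    assert(!estimates.empty());
    std::size_t best = 0;
    for (std::size_t i = 1; i < estimates.size(); ++i) {
        if (estimates[i].variance < estimates[best].variance) {
            best = i;
        }
    }
    return best;
}
```

For instance, a GNSS fix with a variance of 1 m² and a SLAM estimate with a variance of 3 m² would be blended with weights 3:1 in favor of GNSS. Indoors, where the GNSS variance grows, the same rule would shift the weight toward SLAM.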

The implementation is written in C++ using the Point Cloud Library (PCL).
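Positioning a scan amounts to transforming its points from the sensor frame into a common map frame using the estimated pose. The dependency-free sketch below shows that step under simplifying assumptions: the pose is reduced to a translation plus a heading (yaw) about the vertical axis, and `Point3`, `Pose`, and `toMapFrame` are hypothetical names used only for illustration. In a PCL-based implementation, the full 6-DoF equivalent would typically be done with `pcl::transformPointCloud` and an Eigen transform.

```cpp
#include <cassert>
#include <cmath>
#include <vector>

// Hypothetical types for illustration; not part of PCL or the project.
struct Point3 {
    double x, y, z;
};

struct Pose {
    double tx, ty, tz;  // sensor origin in the map frame, in metres
    double yaw;         // rotation about the vertical (z) axis, in radians
};

// Rotate each sensor-frame point by the yaw angle, then translate it by the
// sensor position, yielding coordinates in the map frame.
std::vector<Point3> toMapFrame(const std::vector<Point3>& scan, const Pose& pose) {
    assert(std::isfinite(pose.yaw));
    const double c = std::cos(pose.yaw);
    const double s = std::sin(pose.yaw);
    std::vector<Point3> out;
    out.reserve(scan.size());
    for (const Point3& p : scan) {
        out.push_back({c * p.x - s * p.y + pose.tx,
                       s * p.x + c * p.y + pose.ty,
                       p.z + pose.tz});
    }
    return out;
}
```

A real LiDAR platform also pitches and rolls, so a production version would use a full rotation (for example a quaternion from the IMU) rather than yaw alone.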

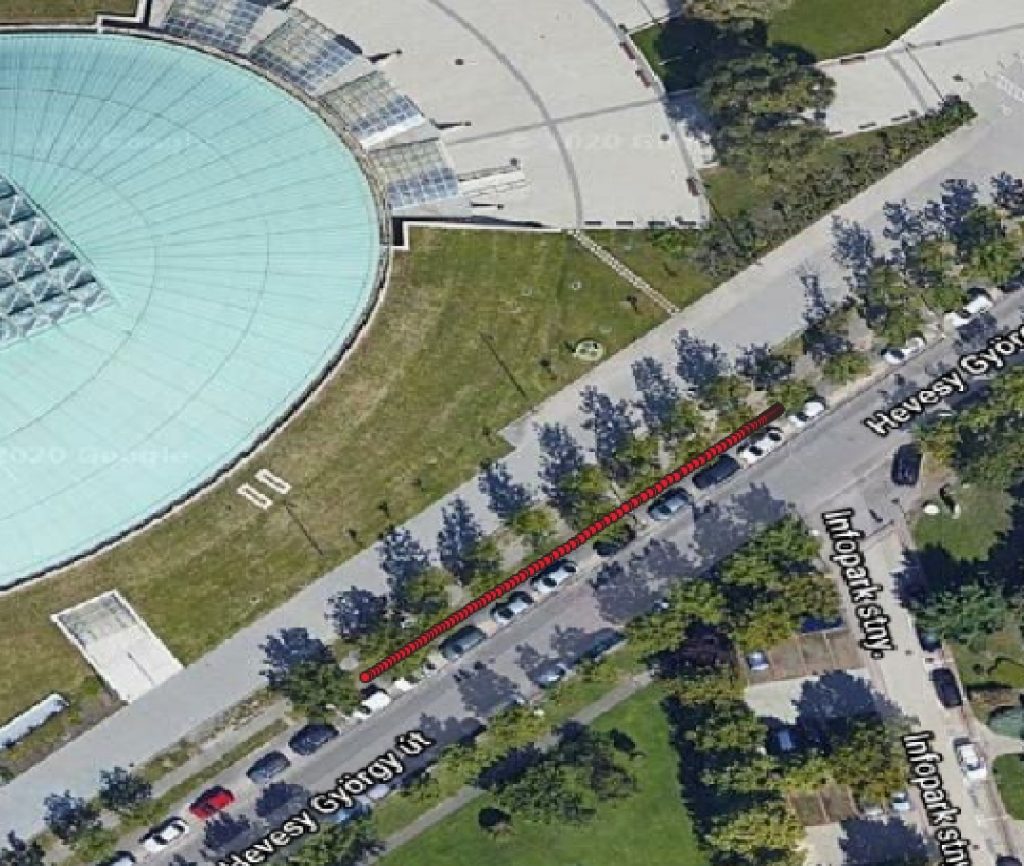

The research also includes practical testing and data collection, for which the Faculty has the following equipment:

- Velodyne VLP-16 LiDAR sensor;

- Raspberry Pi 4 with a BerryGPS-IMU (GPS and IMU) sensor;

- Stonex S9III Plus geodetic precision GNSS sensor;

- MediaTek MT3339 GNSS sensor;

- mobile phone GNSS sensor.

Source code availability

Public project (published developments): https://github.com/GISLab-ELTE/lidar-processor

University project (unpublished developments): https://gitlab.inf.elte.hu/gislab/lidar-processor

Publications

- Péter Farkas, Dominik Jámbor: LiDAR point cloud positioning using sensor fusion, TDK thesis, 2022.

- Roxána Provender: Spatial localization of LiDAR point clouds by sensor fusion, MSc thesis, 2020.